In March, the White House released its legislative priorities for AI, which reaffirmed President Trump’s commitment to protecting “the four Cs:” 1) Children; 2) Creators; 3) Conservatives; and 4) Communities. While the administration’s AI framework provides high-level guidelines for implementing each of the four Cs, it charges Congress with addressing the details in legislation. To that end, Senator Marsha Blackburn’s (R-TN) draft legislation, the TRUMP AMERICA AI Act, is the most comprehensive AI legislation proposed to date.1

Contrary to the claims of critics who have characterized it as a threat to American technological leadership, this legislation would create substantive guardrails to ensure that American AI leadership remains focused on its groundbreaking potential and is not compromised by Big Tech’s track record of harming kids, censoring speech, and rending America’s social fabric.

“Instead of pushing AI amnesty, President Trump rightfully called on Congress to pass federal standards and protections to solve the patchwork of state laws that has hindered AI innovation,” Senator Blackburn said in a statement to IFS. “Now, Congress must answer the President’s call to establish one federal rulebook for AI to protect children, creators, conservatives, and communities, and ensure America triumphs over foreign adversaries in the global race for AI dominance.”

Americans Want AI Guardrails

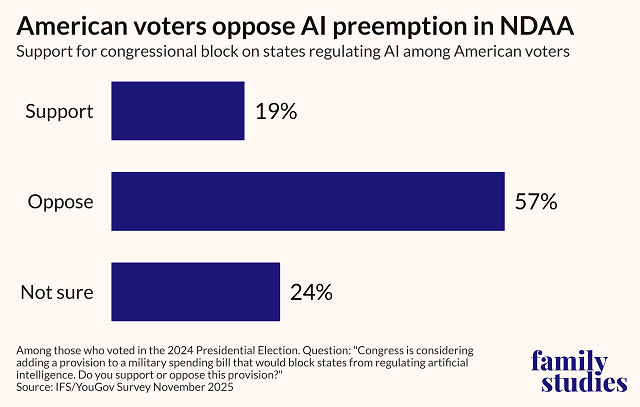

The TRUMP AMERICA AI Act not only provides an excellent vehicle for implementing the President’s AI framework but also reflects the beliefs and desires of American voters. Polling by the Institute for Family Studies and other organizations shows that the U.S. electorate overwhelmingly supports placing strong guardrails on AI companies. For example, while industry has repeatedly called on Congress to block states from implementing commonsense laws to protect kids, workers, and families, Americans oppose preemption of state AI laws by a margin of 3 to 1. Those industry efforts include a recent attempt by lobbyists to slip a preemption measure into the National Defense Authorization Act (NDAA).

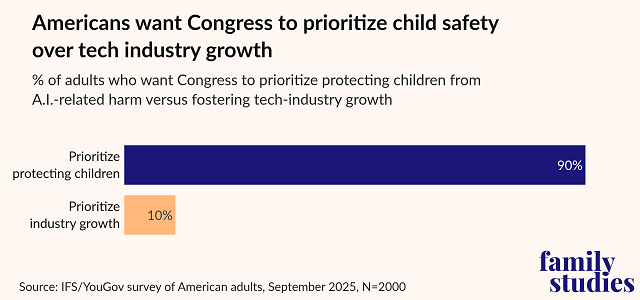

Not only that, when IFS asked American voters whether Congress should prioritize protecting kids from AI harms or advancing the AI industry’s growth, voters said that safeguarding kids should take precedence over industry growth by a margin of 9 to 1.

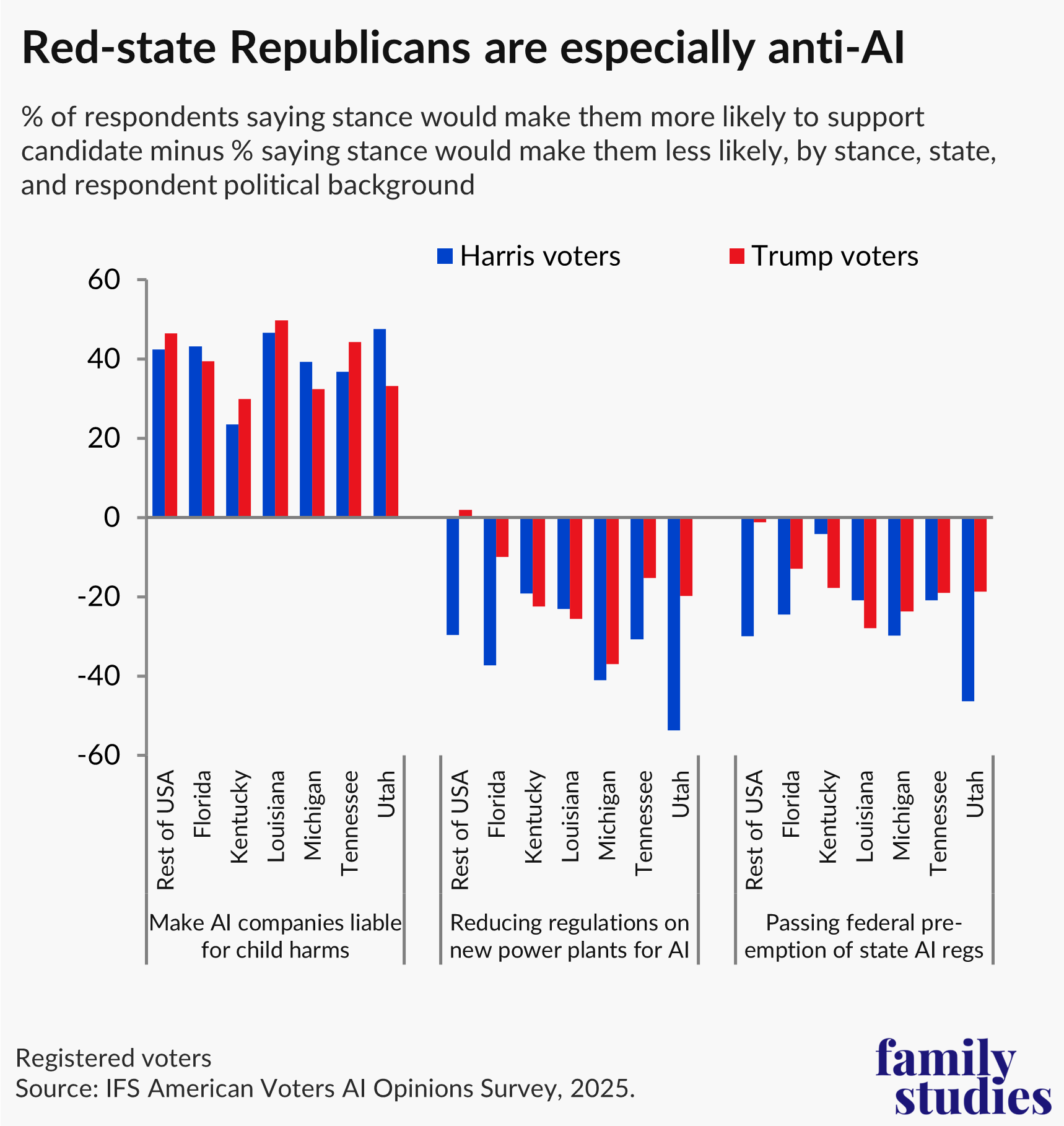

Despite what some tech industry lobbyists say, enacting commonsense AI guardrails is not a “liberal cause.” In a survey of 6,200 American voters, with oversamples in five red states, IFS found that AI is quickly becoming a kitchen-table issue for a large slice of the American electorate. Trump voters in red states say that they are willing to switch their votes to candidates who want to safeguard children from AI systems and who oppose industry carveouts like preemption and cutting red tape to open data centers that raise electricity costs.

Sen. Blackburn’s TRUMP AMERICA AI Act addresses the main concerns voiced by ordinary Americans on both the political left and right. It presently contains 17 titles, many of which are commonsense proposals that draw from existing legislation with wide bipartisan support. For example:

- Title III sunsets Section 230, something advocates on the center-right and left have called on Congress to do for years;

- Title IV incorporates language from the Kids’ Online Safety Act, which passed the U.S. Senate on a 91-to-3 vote in 2024;

- Title V incorporates language from the GUARD Act, bipartisan legislation introduced by Senator Josh Hawley (R-MO) in October 2025, which would place restrictions on AI chatbots and companions to protect children.

Many of the other provisions similarly incorporate commonsense ideas. These include: 1) tracking AI labor disruption; 2) creating a framework for frontier labs to report emergent model capabilities and their implications for cybersecurity and societal risks; 3) requiring AI companies to disclose ideological biases in their models; and 4) codifying President Trump’s Ratepayer Protection Pledge to prevent American households from shouldering increased energy costs from AI datacenters.

Most importantly to IFS, Senator Blackburn’s TRUMP AMERICA AI Act provides an excellent starting point for AI rules of the road that prioritize American children, creators, conservatives, and communities. Far from being “partisan” or “controversial,” the provisions in this bill are overwhelmingly favored by the American public as well as by many lawmakers and advocates across the political spectrum.

Children

The legislation provides substantive, long overdue safeguards for minors interacting with social media and AI chatbots:

- Title IV incorporates language from the Kids’ Online Safety Act (KOSA) that would establish several critical safeguards, including a duty of care requiring internet platforms to minimize design features that encourage compulsive usage of their services or harm minors’ physical safety, mental health, or social welfare. Social media’s harmful impacts on children are well known and were the basis of a landmark April 2026 jury verdict against Meta and Google in California. Both companies were found liable for designing systems that contributed to kids’ compulsive usage of their products, and Meta was found liable by a separate jury in New Mexico for failing to implement safeguards recommended by its own employees to protect children from sexual exploitation and abuse.

- Title V incorporates language from the GUARD Act that would ban Big Tech companies from providing AI companions to minors, require chatbots to disclose their nonhuman status, and make it a criminal offense to knowingly make AI chatbots that generate sexual content or engage in sexualized conversations with minors. These protective measures are essential to prevent Big Tech companies like Meta and X from pushing AI “companions” and characters that engage minors sexually. Grok has a “spicy mode” which engages users in sexual conversations, and Meta previously indicated in official documentation that sensual conversations between minors and its AI chatbots were “acceptable.” But the problem is not restricted to large internet platforms. According to a 2025 survey by Common Sense Media, 52% of teens regularly interact with AI “companions” and 33% of teens use AI chatbots for “social interaction and relationships.” Among the providers is Character.AI, which recently settled a wrongful death lawsuit from the mother of Sewell Setzer III, a 14-year-old boy who took his own life after being groomed and manipulated for months by one of the company’s AI companions.

- Title VII would create a federal product liability standard for AI products, enabling the U.S. Attorney General, state attorneys general, or private parties to file suit against tech companies for harms caused by their AI systems under certain circumstances, including failure to warn about unreasonably dangerous or defective product design. This largely tracks with existing state tort and consumer protection laws, which are already being utilized by plaintiffs to hold Big Tech accountable, and would help streamline and clarify AI product liability.

Creators

The legislation also extends needed protections to artists and creative professionals against unauthorized AI replicas of themselves or renditions of their works. Title XII, in particular, is modeled on Tennessee’s ELVIS Act, and would:

- Hold persons and companies liable if they distribute AI-generated copies of an artist’s voice or visual likeness without permission;

- Impose liability on platforms that host an artist’s digital likeness, if the platform has knowledge that the likeness was generated or distributed without the artist’s permission.

Conservatives

The legislation delivers meaningful standards and guardrails to hold Big Tech accountable for years of ideological bias and censorship. In particular:

- Title III sunsets Section 230 of the Communications Decency Act over a two-year period, forcing industry to the table and giving Congress a hard deadline to reform Big Tech’s liability shield. Section 230 has allowed Big Tech companies like TikTok, Meta, X, and Snapchat to skirt lawsuits for harming kids and censoring speech. This no-holds-barred reading of the statute has, as U.S. federal judge Paul Matey complained in 2024, “become popular among a host of purveyors of pornography, self-mutilation, and exploitation, one that smuggles constitutional conceptions of a ‘free trade in ideas’ into a digital ‘cauldron of illicit loves’ that leap and boil with no oversight, no accountability, no remedy.” Even U.S. Senator Rand Paul, long a staunch proponent of Section 230, penned a lengthy op-ed in 2025 describing the many censorious campaigns waged against him by Google and Meta, which convinced him to favor scrapping the liability shield.

- Title VIII requires third-party audits of AI systems to identify ideological bias and censorship. Big Tech’s manipulation of public discourse is a longstanding problem that has disproportionately affected pro-family groups and conservative voices. Research from the tech watchdog groups Media Research Center and America’s Digital Shield has revealed some of the disturbing ways that Google and other Big Tech firms use their AI algorithms to influence U.S. elections, most frequently to the advantage of liberal politicians and causes. Now, as increasing numbers of Americans turn to AI chatbots for guidance on voting, politics, and significant life decisions, hidden ideological biases in AI models could further threaten American family values and republican self-governance.

Communities

The legislation creates transparency mechanisms for tracking AI employment disruption and shields households from rising electricity bills from datacenters. Specifically:

- Title II requires covered enterprises and federal agencies to report AI-related job displacement to the Department of Labor (DOL) on a quarterly basis and further requires DOL to report that data to Congress and the public. This reporting mechanism is important for guiding federal action and resources intended to address labor disruptions from AI.

- Title XI requires data center operators to cover higher electricity costs driven by increased power demand from their facilities as a condition of receiving federal incentives. According to one report, average electricity prices in the U.S. rose to 19 cents per kWh in 2025, largely due to increased demand from data centers. At the same time, states with a high concentration of data centers, like Virginia, have seen electricity rates skyrocket by as much as 267% over the last five years.

The TRUMP AMERICA AI Act discussion draft provides a strong foundation to build upon and improve. It protects American families, incorporates the most commonsense and bipartisan Congressional legislation on AI, and meaningfully advances the President’s vision for American technological leadership.

Daniel Cochrane is Senior Fellow for the IFS Family First Technology Initiative. Michael Toscano is Senior Fellow and Director of the Family First Technology Initiative for the Institute for Family Studies.

1. Full title: “Republic Unifying Meritocratic Performance Advancing Machine Intelligence by Eliminating Regulatory Interstate Chaos Across American Industry Act”