Highlights

The White House has recently switched gears from opposing pre-deployment testing of frontier AI models to considering mandatory testing for model safety before such models are released to the public. News broke in April that the White House is considering issuing an executive order to establish a formal government review process to evaluate whether new AI models meet certain safety standards. Kevin Hassett, the director of the National Economic Council, compared the prospective vetting process to the evaluation mechanisms of the Food and Drug Administration, saying that “[AIs] should go through a process so that they’re released to the wild after they’ve been proven safe, just like an FDA drug.”

The Institute for Family Studies has conducted five previous polls on how the public, and voters in particular (including Trump voters), feel about AI and AI policy. Their subjects have ranged from public attitudes toward AI companions to voters' strong opposition to blocking states from regulating AI. In sum, this research forced us to conclude that,

our results range from cases where the public overwhelmingly opposes the interests and arguments of the AI industry, to cases where it is at best ambivalent towards them. This can be seen as evidence against the viability of AI accelerationism as a salient political force in the United States.

Having examined the politics of AI closely, we wondered how voters would feel about the White House’s new emphasis on ensuring that AI systems are safe for society, children, and families. To test these policy ideas, we fielded a poll with YouGov on May 7, surveying a nationwide sample of 1,000 Americans.

Our hypothesis was that voters would support this policy, and that even Democratic voters, despite strongly disfavoring the White House, would support it as well. We also wanted to understand for whom voters thought safety was important. We expected voters to strongly support efforts to ensure AI systems are safe for national security purposes, but we wondered: would they also want AI systems evaluated for safety to kids, families, and other constituencies?

According to our results, the answer is resoundingly yes. Here are our findings.

The Results

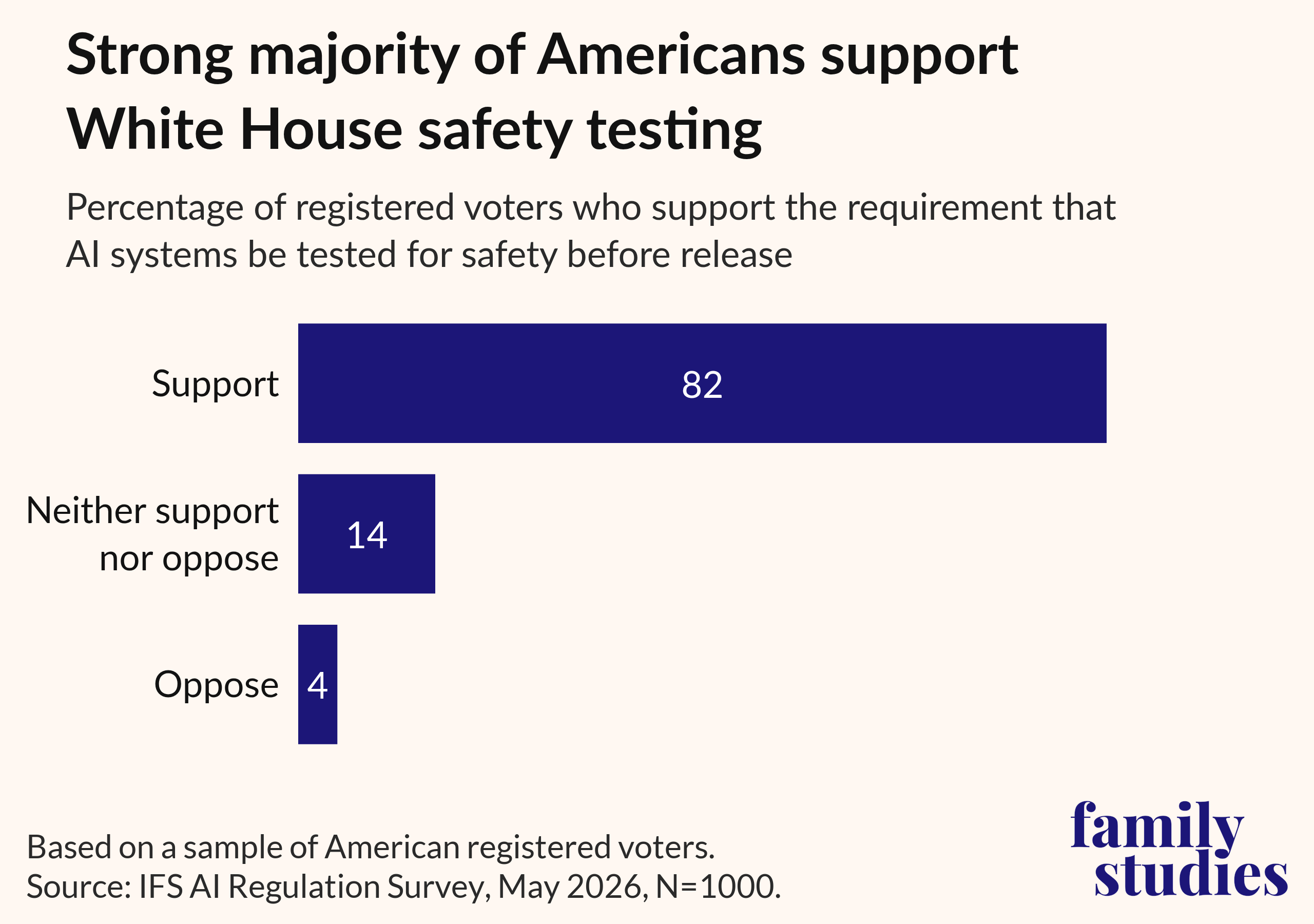

First, 82% of American voters support the White House’s proposal to vet AI systems for safety, only 4% oppose it, and 14% are neutral. That is to say, Americans support the White House’s pivot toward establishing safety procedures for AI by a margin of 20 to 1.

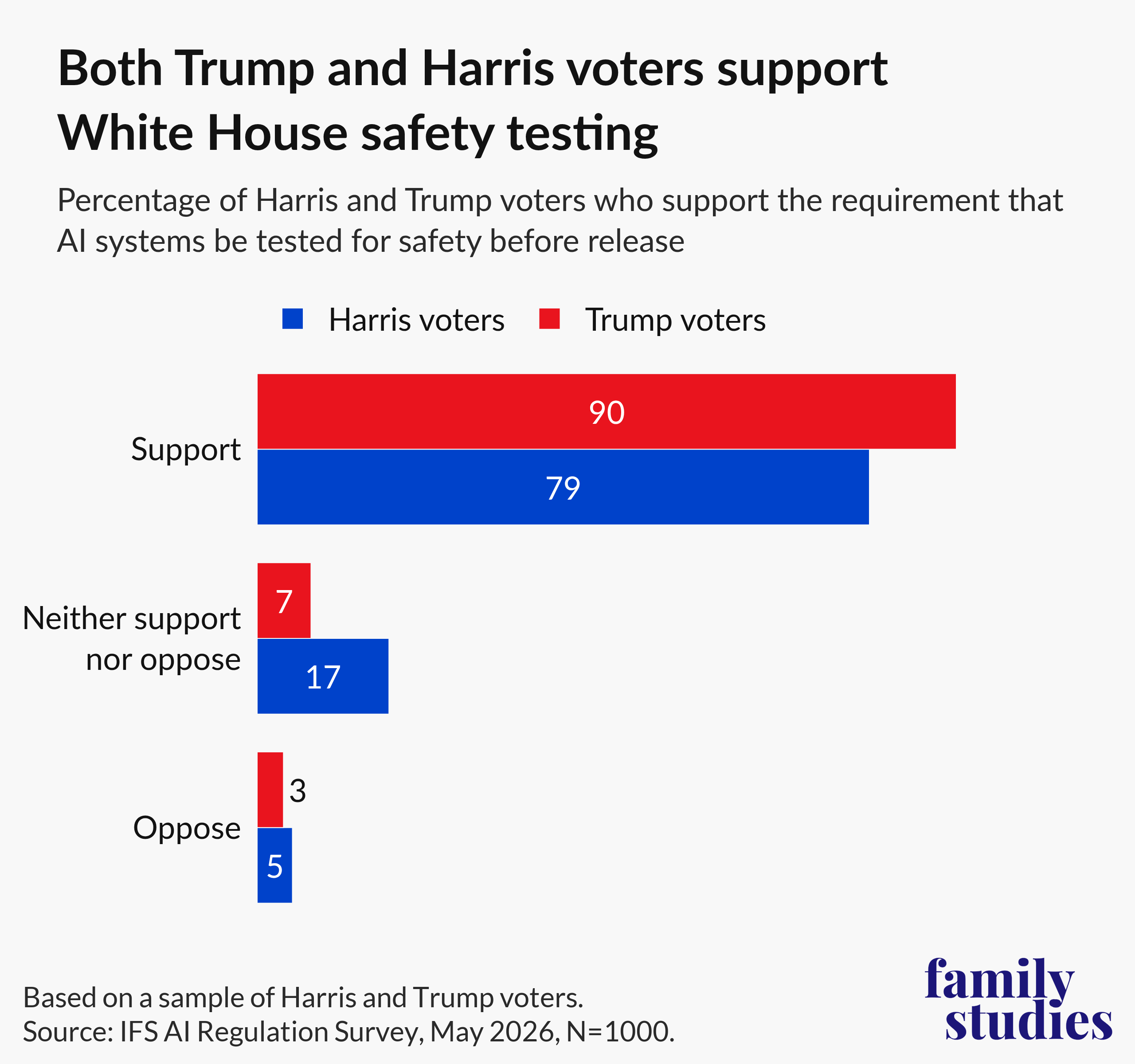

Our previous polling has repeatedly found bipartisan opposition to the White House’s policy of blocking states from regulating AI. On evaluating AI systems for safety, however, the pattern is reversed. We find strong bipartisan support for this policy in our new poll: 90% of Trump voters support pre-deployment vetting of AI models, compared with only 3% who oppose it. We assumed that Harris voters would be somewhat less likely to support this policy, simply because it comes from the Trump White House. Even so, 79% of Harris voters support this measure, while a mere 5% oppose it. In other words, despite the polarization of American politics, the White House’s proposal to evaluate AI for safety before its release to the public is extremely popular across the board.

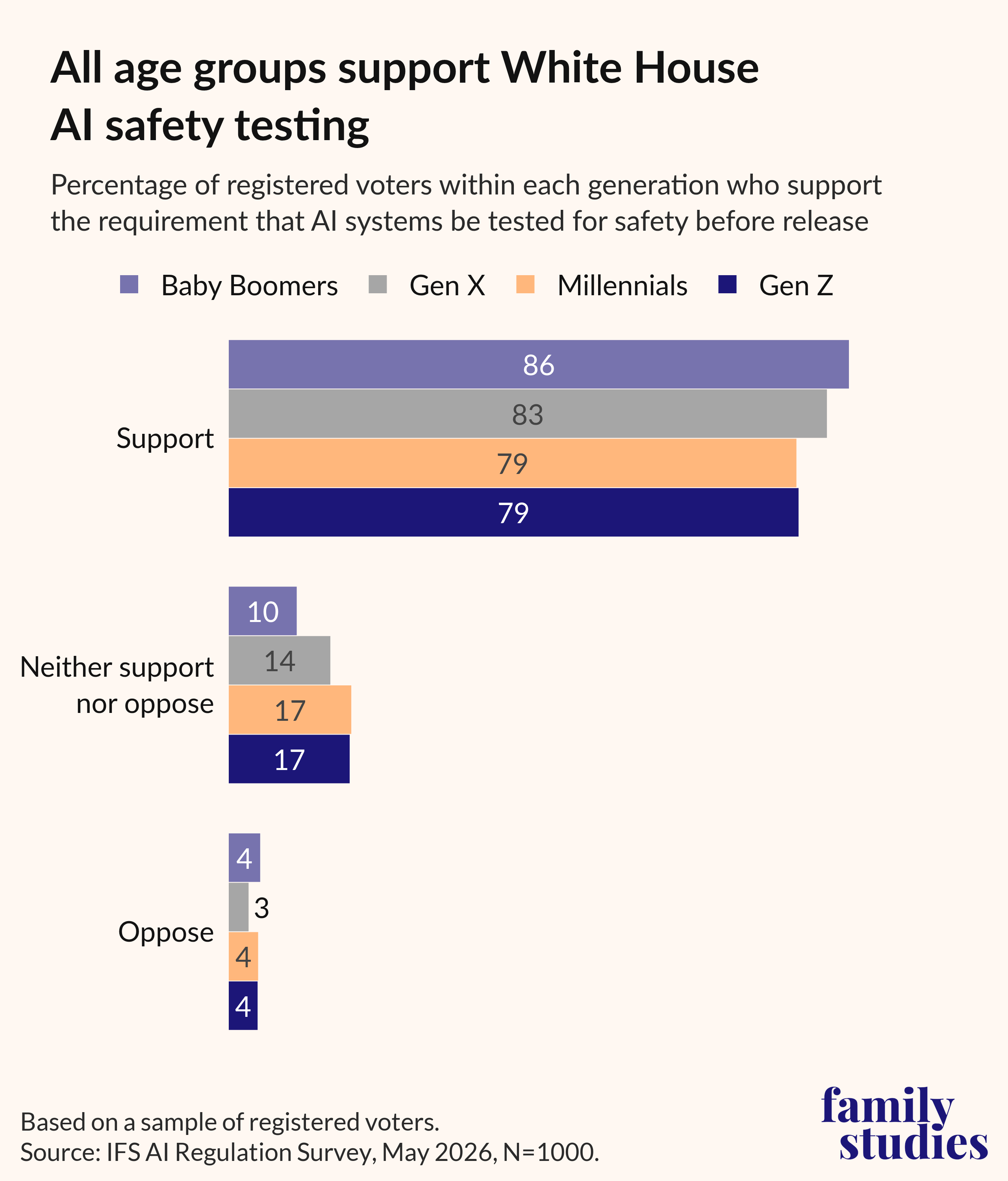

We also find that the measure is popular across all age groups, with vast majorities in every generation supporting mandatory safety evaluations.[1]

Safety for Whom?

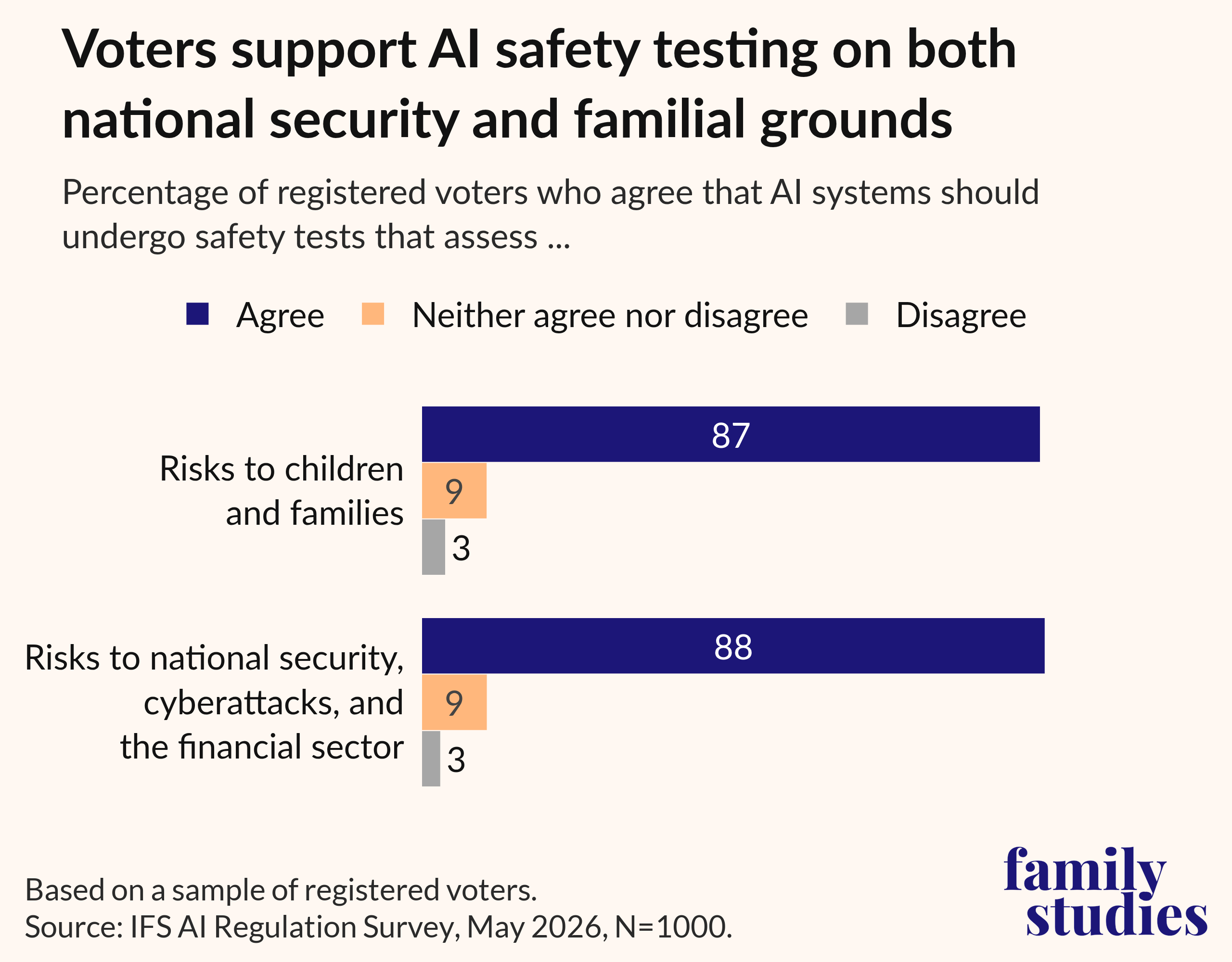

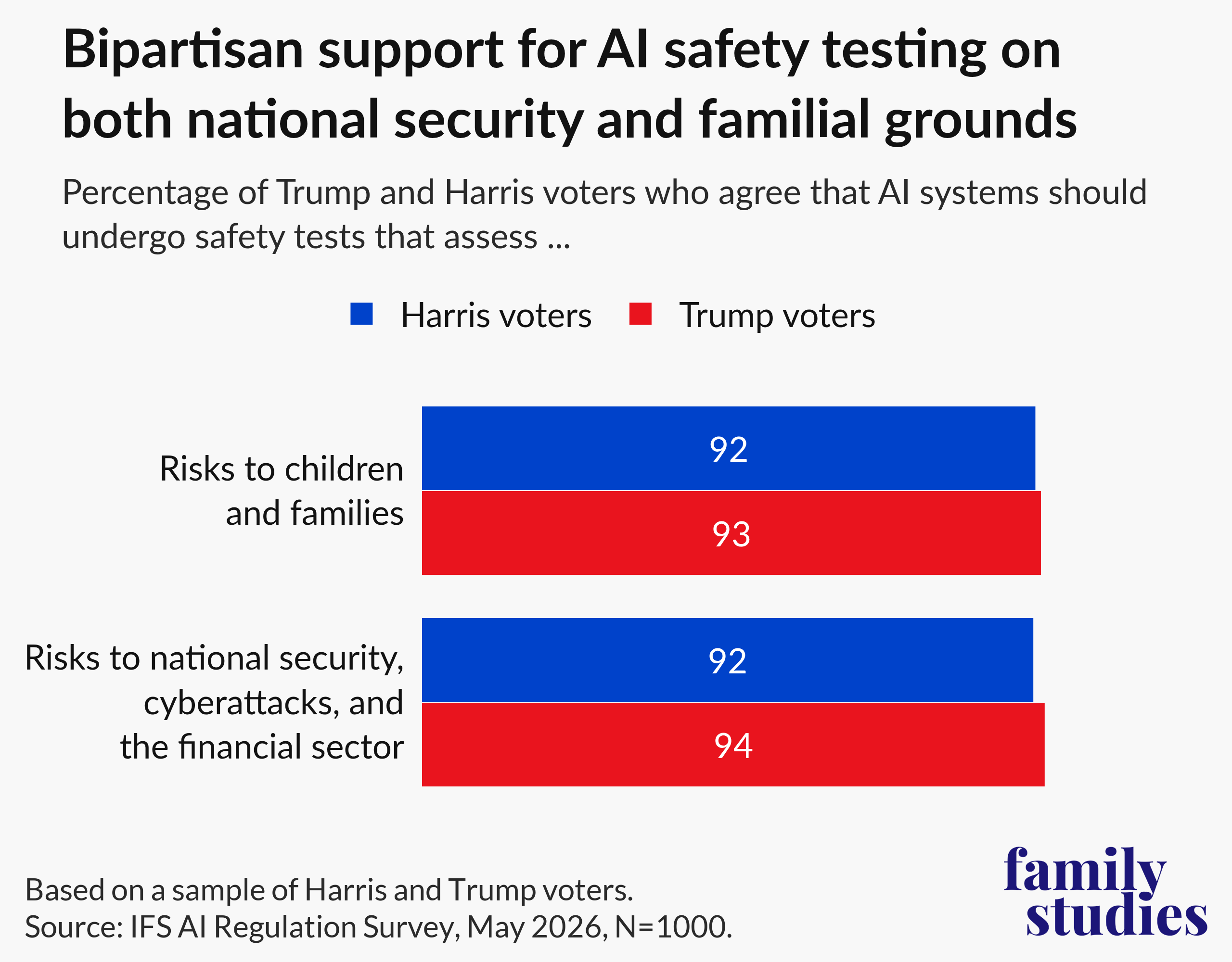

A question that often goes unasked is: who deserves protection against AI harms? Or, rather, the prevailing assumption, at least among AI experts and policy elites, is that safety evaluations must concentrate on threats to national security, the financial sector, and cybersecurity. Do American voters agree with policy experts that this should be a priority? Our hypothesis was that the answer would be yes, but we also wondered whether Americans would want the White House to evaluate AI systems for safety to children and families before their public release.

The answer in both cases, on national security and child and family safety, is a resounding yes. By a landslide, American voters want AI systems to be evaluated for national security (88%) as well as for the effect they will have on the well-being of children and families (87%).

Support for family-impact evaluations is effectively the same as support for national security evaluations. Yet family impact is not typically a primary focus for AI safety experts, and so, downstream, it is also less familiar to the voting public. For that reason, voters' strong support for the White House evaluating AI systems for child safety is the more interesting of the two results and potentially the more organic.

This paired question was not framed as a White House policy, and we anticipated that this omission would make Harris voters' support even stronger. That expectation bore out in our results: Trump and Harris voters support these measures, i.e., safety testing for national security and for family well-being, equally and in overwhelming numbers.

In sum, American voters strongly support evaluating AI systems for national security risks, but they also take a wider view of whom it is important to keep safe. Both Trump and Harris voters strongly support testing AI to ensure that children and families are kept safe.

What Harms Matter?

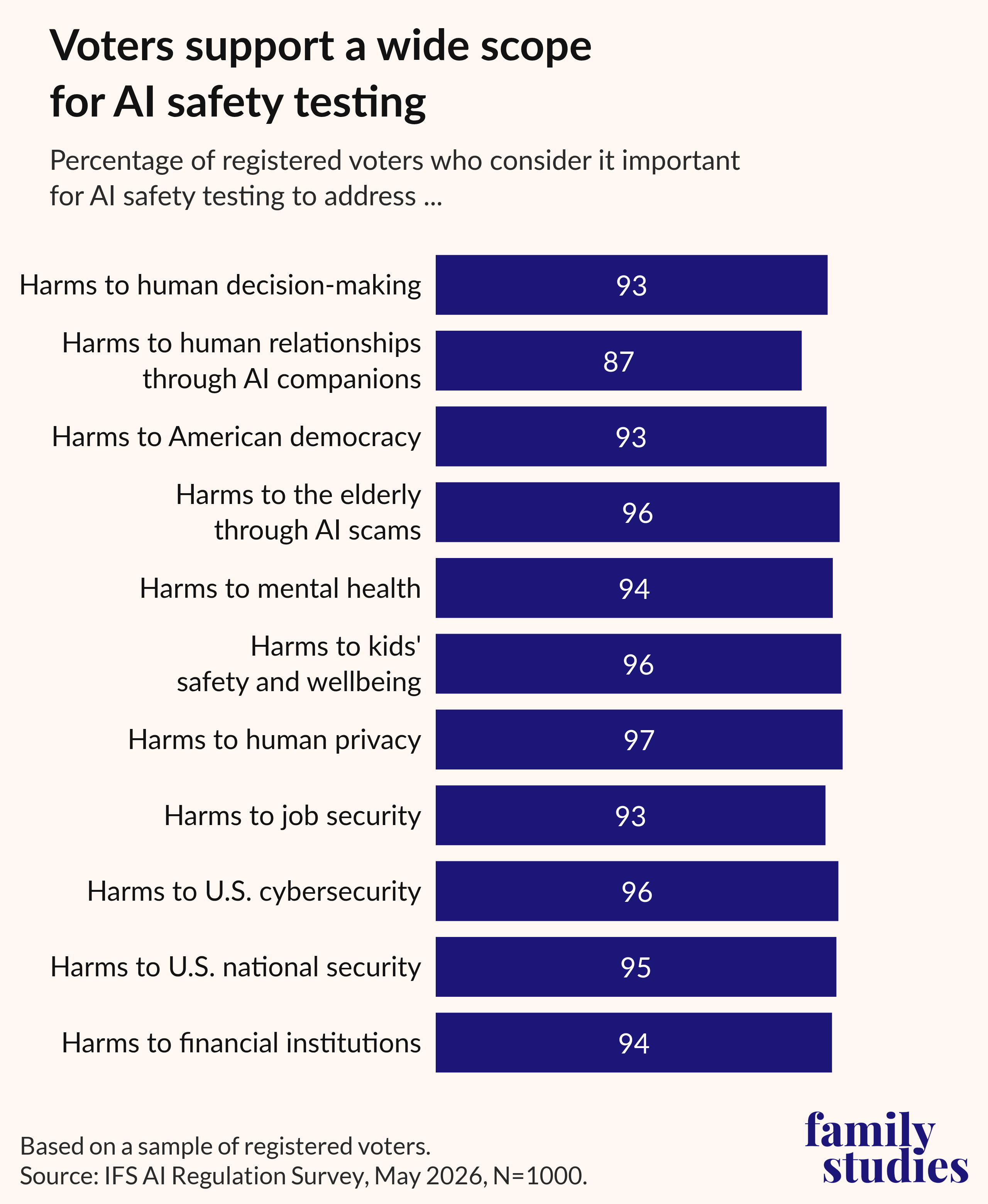

For the final question of this survey, we wanted more detail on the types of harms for which voters want AI systems evaluated. To that end, we offered 11 categories of harm, ranging from harms to national security, mental health, the elderly, human privacy, and human decision-making to AI scams (see the figure below for a full list). We also randomized the order of the categories so that respondents would not be biased by the sequence in which they were presented.

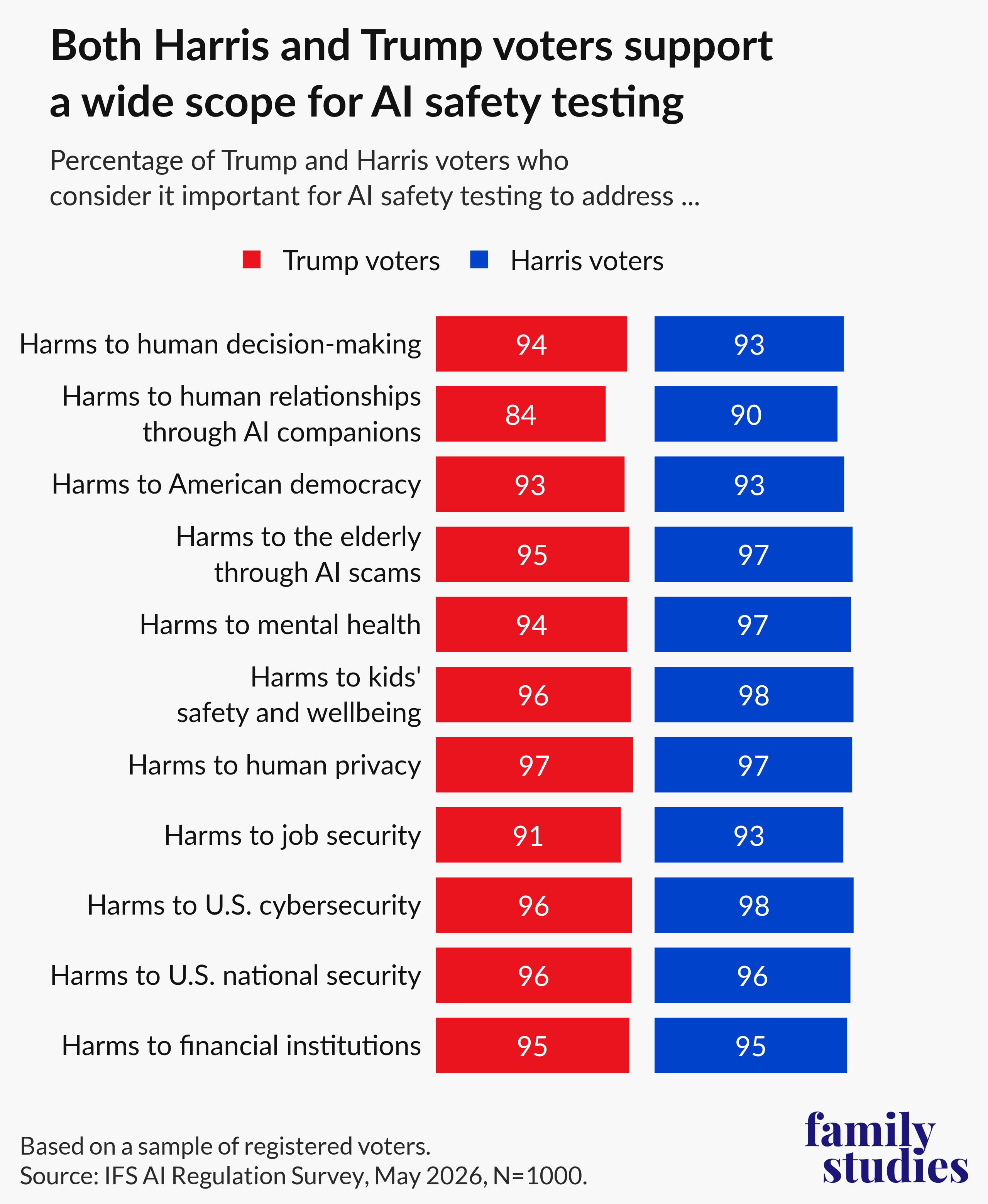

Again, our hypothesis for this question was that voters would strongly agree that harms to national security, cybersecurity, and financial institutions are important to evaluate, and that hypothesis turned out to be correct. But, as mentioned, having previously conducted five polls on AI, we understand that the public tends to have a much wider vision for AI policy and regulation than does the policy establishment in Washington. So we anticipated majority support for pre-deployment evaluation of all 11 harms. That is exactly what we find.

As the results show, Americans broadly support efforts to evaluate AI for safety before release and to keep AI from inflicting harm. Strong majorities of voters support evaluating AI systems for each and every listed harm. Frankly, this puts the American voter out of sync with the policy establishment, which tends to be more motivated to protect elite institutions than to address these other concerns, which it does not consider “critical.” But voters see things differently: they clearly believe both are critical.

Once again, we see that support for vetting AI systems against these 11 harms is bipartisan.

Conclusion

In short, unlike the White House’s policy to block states from regulating AI (e.g., the 10-year moratorium), which was overwhelmingly unpopular with Trump and Harris voters, this policy receives strong support from American voters on both sides of the aisle. By implementing a policy to vet AI systems for safety before deployment, the White House would win strong approval from voters.

Importantly, American voters, including Trump voters, want to see a wider array of safety evaluations applied to AI than national security evaluations alone, important as those are. In fact, these numbers suggest that Americans want the administration to think even bigger about these issues.

Why might that be the case? Unlike preemption (a White House policy that would diminish the safety of American kids, families, and the elderly), AI safety testing would proactively protect Americans from harmful uses of artificial intelligence. For reasons that should be obvious, Americans support such a pivot.

Michael Toscano is a Senior Fellow and Director of the Family First Technology Initiative at the Institute for Family Studies. Ken Burchfiel is a Research Fellow at the Institute for Family Studies. Daniel Cochrane is Senior Fellow for the IFS Family First Technology Initiative.

1. Respondents were categorized as Baby Boomers if they were born between 1946 and 1964, as Gen Xers if born between 1965 and 1980, as Millennials if born between 1981 and 1996, and as Gen Zers if born between 1997 and 2012. This categorization was based on this visualization. Because our sample included only 26 registered voters from the Silent Generation (1928-1945), we chose not to include this group in our graph.